
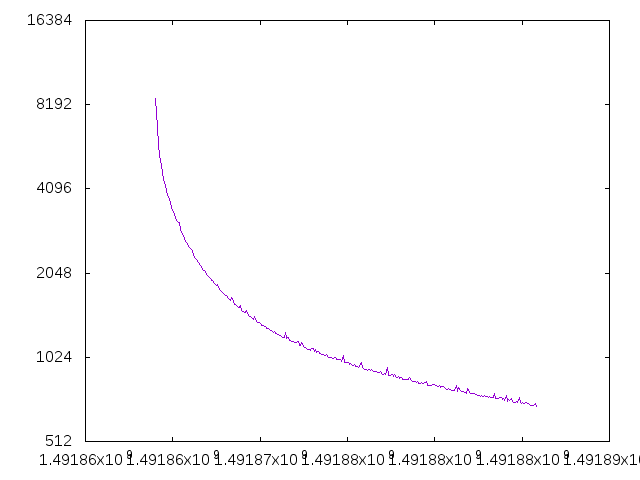

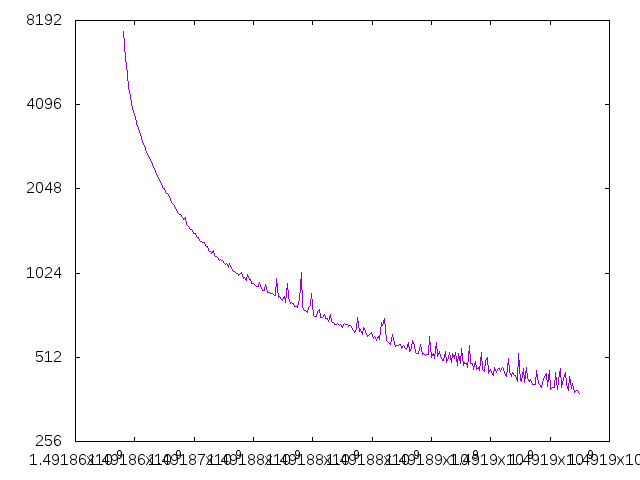

A quick post on the results of my first comparison between a 2-layer fully connected network and a DagNN. I removed most sources of randomness for this example so that the comparison is reasonably fair. The only one left is the order in which training examples are processed by SGD. Notably, as I removed more and more sources of randomness, the differences shifted further in favor of DagNN, not away from it. The conclusion of this test is that DagNN performs better node-for-node per epoch than the standard 2-layer fully connected network, at least in this example. This roughly matches intuition: more weights between the same number of nodes should increase the overall computational power of the network.
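To make the "more weights between the same number of nodes" intuition concrete, here is a rough sketch that counts weights for the two kinds of network. It is not the code behind the results above, and it rests on assumptions of mine: "2-layer" is read as two hidden layers, biases are ignored, and the DAG is taken to be maximally connected (each hidden node receives an edge from every input and every earlier hidden node, and each output receives an edge from every input and hidden node). The function names and the example sizes are hypothetical.

```python
def layered_weight_count(n_in, hidden_sizes, n_out):
    """Weights in a fully connected layered network (biases ignored)."""
    sizes = [n_in, *hidden_sizes, n_out]
    # Each pair of adjacent layers is densely connected.
    return sum(a * b for a, b in zip(sizes, sizes[1:]))


def dag_weight_count(n_in, n_hidden, n_out):
    """Weights in an assumed maximally connected DAG (biases ignored).

    Hidden nodes are placed in a fixed order. Hidden node k (0-based)
    receives edges from all inputs and from the k hidden nodes before it.
    Each output receives edges from all inputs and all hidden nodes.
    """
    hidden_edges = sum(n_in + k for k in range(n_hidden))
    output_edges = n_out * (n_in + n_hidden)
    return hidden_edges + output_edges


# Same node budget in both cases: 4 inputs, 16 hidden nodes, 2 outputs.
layered = layered_weight_count(4, [8, 8], 2)
dag = dag_weight_count(4, 16, 2)
print(f"layered: {layered} weights, DAG: {dag} weights")
```

Under these assumptions the DAG has 224 weights against 112 for the layered network with the same 22 nodes. The exact ratio depends on the sizes chosen and on how densely the DAG is actually wired.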

More rigorous comparisons on some of the standard test cases still need to be done, but this is a good first step that offers some preliminary credibility.

